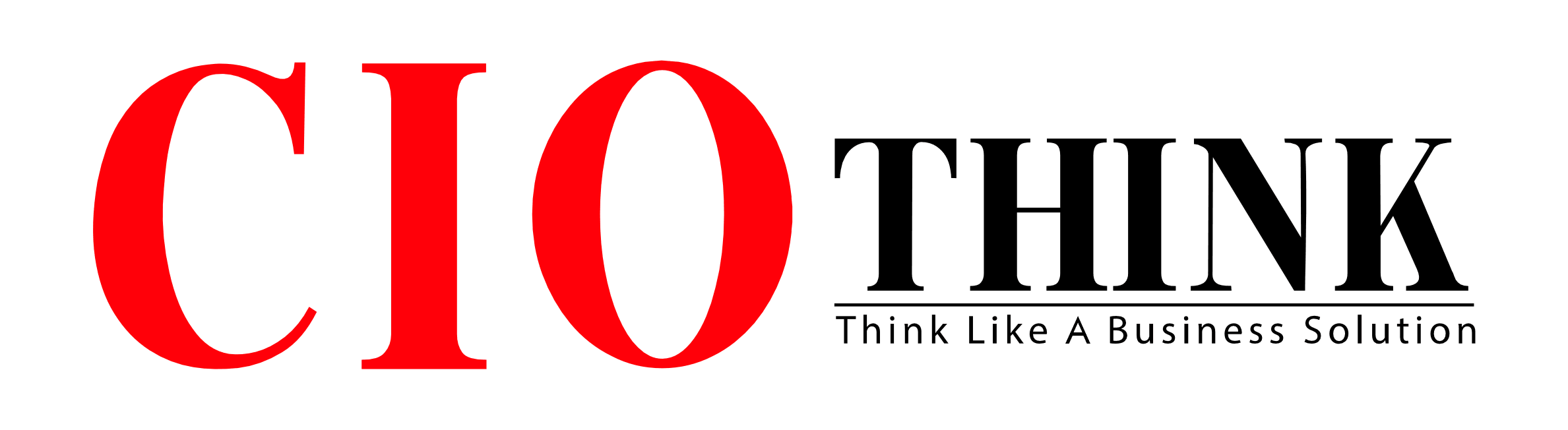

NVIDIA has introduced a localized data center architecture designed to let cities process artificial intelligence (AI) workloads locally, reducing dependence on large, centralized server farms. The announcement, led by CEO Jensen Huang, marks a potential shift in how AI infrastructure is deployed in urban environments.

The new model focuses on bringing high-performance computing closer to where data is generated, allowing real-time processing within city-level infrastructure. This approach is expected to reshape enterprise AI adoption and urban digital ecosystems.

Local AI Processing for Smart Cities

The core idea behind the NVIDIA localized data center architecture is to decentralize AI computing power. Instead of routing massive datasets to distant hyperscale data centers, cities can now process information locally.

This enables faster decision-making for applications such as traffic management, public safety systems, smart utilities, and autonomous mobility. Shortening the distance data must travel lowers latency and improves overall system responsiveness.
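To give a rough sense of scale for the distance and latency relationship, here is a minimal sketch of round-trip propagation delay over optical fiber. It assumes a signal speed of about 200,000 km/s in fiber (roughly two-thirds the speed of light in a vacuum). The two distances are hypothetical values chosen for illustration and do not come from NVIDIA's announcement.

```python
# Approximate signal speed in optical fiber (about two-thirds of c).
SPEED_IN_FIBER_KM_PER_S = 200_000.0


def round_trip_ms(distance_km: float) -> float:
    """Round-trip propagation delay over fiber, in milliseconds."""
    return 2 * distance_km / SPEED_IN_FIBER_KM_PER_S * 1000


# Hypothetical distances, for illustration only.
for label, km in [("in-city site", 20), ("distant hyperscale site", 2000)]:
    print(f"{label}: {round_trip_ms(km):.2f} ms")
```

Under these assumptions, the in-city round trip is about 0.2 ms and the distant one about 20 ms. Propagation delay is only one component of total latency; routing, queuing, and processing time come on top of it.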

Addressing Data Privacy and Latency Challenges

One of the key drivers behind this innovation is the growing concern over data privacy and cross-border data transfer regulations. By keeping sensitive data within city boundaries, organizations can better comply with local governance and privacy laws.

At the same time, latency issues, often caused by reliance on distant cloud infrastructure, are reduced. This is particularly critical for time-sensitive AI workloads such as healthcare diagnostics, financial analytics, and real-time surveillance systems.

Why This Is Trending Among CIOs

Chief Information Officers (CIOs) are closely watching NVIDIA’s move as it directly addresses two major enterprise challenges: data security and operational efficiency.

Localized infrastructure reduces dependency on global cloud networks while making performance more reliable. For many enterprises, this could point to a hybrid future in which centralized and localized AI systems work together.
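One way to picture such a hybrid setup is a simple placement rule that decides, per workload, whether it runs at a local site or a central one. The sketch below is purely illustrative: the `Workload` fields, the `choose_site` function, and the 20 ms threshold are all hypothetical and are not part of any NVIDIA product or API.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    contains_sensitive_data: bool
    latency_budget_ms: float


# Assumed round-trip time to a central site; an illustrative value only.
CENTRAL_ROUND_TRIP_MS = 20.0


def choose_site(workload: Workload) -> str:
    """Return "local" or "central" for a workload (hypothetical policy)."""
    # Sensitive data stays within the city boundary.
    if workload.contains_sensitive_data:
        return "local"
    # A tight latency budget rules out the trip to a central site.
    if workload.latency_budget_ms < CENTRAL_ROUND_TRIP_MS:
        return "local"
    return "central"


print(choose_site(Workload("traffic-signals", False, 5.0)))
print(choose_site(Workload("patient-imaging", True, 500.0)))
print(choose_site(Workload("batch-training", False, 60_000.0)))
```

In this sketch, the first two workloads are placed locally (one for latency, one for privacy) and the third goes to the central site. A real policy would also weigh capacity, cost, and regulatory detail.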

The NVIDIA localized data center architecture is also seen as a strategic response to the increasing demand for edge AI capabilities across industries.

The Future of Distributed AI Infrastructure

Industry experts believe this announcement could accelerate the shift toward distributed AI ecosystems, where computing power is spread across cities rather than concentrated in a few global hubs.

In the coming years, more governments and enterprises may adopt localized AI frameworks to enhance digital sovereignty and infrastructure resilience.

As NVIDIA continues to push the boundaries of AI infrastructure design, the broader tech industry is likely to follow, potentially redefining how global AI systems are built and deployed.

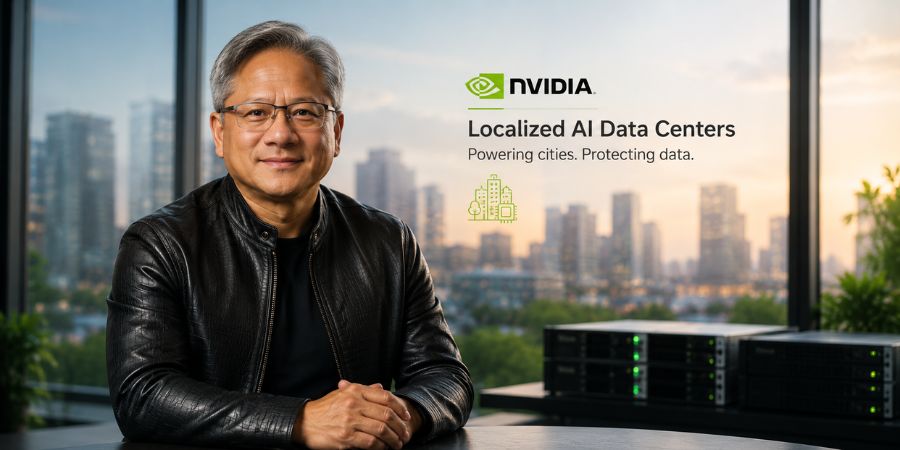
